Well, it's been a while since my last post, but here I am again with a new tool I wrote. I sometimes have to look at how web sites are performing, so I need a tool to check whether the configuration changes I make are taking effect and to measure the performance differences. There are tools like Apache Benchmark that can be used to benchmark web performance, but they only accept a single URL to test, which is far from how a site is normally used.

So I wrote a tool that takes the access log file from Apache or nginx and extracts the URL paths to test, so we can better measure how "normal" usage of the site will behave with this or that configuration. It also reports some stats and plots a graph with the timings. To get an idea of what this tool can do, let's look at its options.

    root@dgtool:~# pywench --help
    Usage: pywench [options] -s SERVER -u URLS_FILE -c CONCURRENCY -n NUMBER_OF_REQUESTS

    Options:
      -h, --help            show this help message and exit
      -s SERVER, --server=SERVER
                            Server to benchmark. It must include the protocol and
                            lack of trailing slash. For example:
                            https://example.com
      -u URLS_FILE, --urls=URLS_FILE
                            File with url's to test. This file must be directly an
                            access.log file from nginx or apache.'
      -c CONCURRENCY, --concurrency=CONCURRENCY
                            Number of concurrent requests
      -n TOTAL_REQUESTS, --number-of-requests=TOTAL_REQUESTS
                            Number of requests to send to the host.
      -m MODE, --mode=MODE  Mode can be 'random' or 'sequence'. It defines how the
                            urls will be chosen from the url's file.
      -R REPLACE_PARAMETER, --replace-parameter=REPLACE_PARAMETER
                            Replace parameter on the URLs that have such
                            parameter: p.e.: 'user=hackme' will set the parameter
                            'user' to 'hackme' on all url that have the 'user'
                            parameter. Can be called several times to make
                            multiple replacements.
      -A AUTH_RULE, --auth=AUTH_RULE
                            Adds rule for form authentication with cookies.
                            Syntax:
                            'METHOD::URL[::param1=value1[::param2=value2]...]'.
                            For example: POST::http://example.com/login.py::user=r
                            oot::pass=hackme
      -H HTTP_VERSION, --http-version=HTTP_VERSION
                            Defines which protocol version to use. Use '11' for
                            HTTP 1.1 and '10' for HTTP 1.0
      -l, --live            If you enable this flag, you'll be able to see the
                            realtime plot of the benchmark

Most of the parameters, like SERVER, CONCURRENCY and TOTAL_REQUESTS, are probably well known or self-explanatory, but there are others that are less common, so let me explain them:

URLS_FILE: This is the access log file from an nginx or Apache server. To test your server, you only have to download its access log and use it as the input file. Please note that the 7th column of the log file is taken as the URL path.

MODE: The URLs can be taken from URLS_FILE randomly or in the order they appear. You choose which with the MODE parameter.

REPLACE_PARAMETER: Sometimes you need to pass a specific parameter so that the server answers as if you were a normal user (a session string, a user name, etc.). With this option, every URL from URLS_FILE is checked for the parameter you want to replace and, if the parameter exists, its value is replaced with the value you specify.

AUTH_RULE: Sometimes you need to authenticate to a server before starting the benchmark to make sure you are treated as a normal user. With this option you choose how to authenticate and pass the authentication parameters. Before the benchmark starts, PyWench logs in to the website and saves the auth cookie, which is then used for all the requests.

HTTP_VERSION: You can use HTTP 1.0 or HTTP 1.1 for the benchmarks.

--live: Shows a live plot of how well the server is handling the PyWench requests during the benchmark.
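To make the options above more concrete, here is a minimal Python sketch of the ideas behind URLS_FILE, MODE, REPLACE_PARAMETER and AUTH_RULE. It is not PyWench's actual code: the function names are made up for illustration, and it assumes that log columns are separated by whitespace, that 'sequence' mode wraps around when the file has fewer URLs than requests, and that replacing a parameter may re-encode the query string.

```python
import random
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def extract_path(log_line):
    """Return the 7th whitespace-separated field of a log line, or None."""
    fields = log_line.split()
    return fields[6] if len(fields) > 6 else None


def pick_paths(paths, total, mode="random"):
    """Choose `total` paths at random or in file order (assumed to wrap around)."""
    if mode == "random":
        return [random.choice(paths) for _ in range(total)]
    return [paths[i % len(paths)] for i in range(total)]


def replace_parameter(path, rule):
    """Apply a 'name=value' rule: overwrite `name` only where it already exists."""
    name, _, value = rule.partition("=")
    parts = urlsplit(path)
    query = parse_qsl(parts.query, keep_blank_values=True)
    new_query = [(key, value if key == name else old) for key, old in query]
    return urlunsplit(parts._replace(query=urlencode(new_query)))


def parse_auth_rule(rule):
    """Split 'METHOD::URL[::param=value...]' into (method, url, params)."""
    method, url, *pairs = rule.split("::")
    params = dict(pair.split("=", 1) for pair in pairs)
    return method.upper(), url, params


if __name__ == "__main__":
    line = ('127.0.0.1 - - [10/Oct/2013:13:55:36 +0200] '
            '"GET /profile?user=bob&page=2 HTTP/1.1" 200 2326')
    path = extract_path(line)
    print(replace_parameter(path, "user=hackme"))
    print(parse_auth_rule("POST::http://example.com/login.py::user=root::pass=hackme"))
```

With the default combined log format, the 7th whitespace-separated field is the request path, which is why a plain `split()` is enough in this sketch.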

Well, let's see how it works with an example! In this example we will test our favourite server: example.com. It will be a basic use case.

To start the benchmark we need two things: the server URL (protocol and domain name) and the access.log file. In this example we will use https://www.example.com as the server URL (to point out that it also works with HTTPS). We will start with 500 requests and a concurrency of 50.

root@dgtool:~# /pywench -s "https://www.example.com" -u access_log -c 50 -n 500

Requests: 500 Concurrency: 50

[==================================================]

Stopping... DONE

Compiling stats... DONE

Stats (seconds)

min avg max

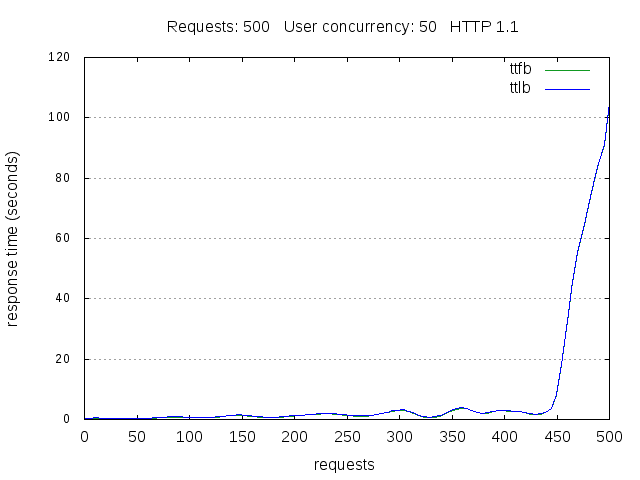

ttfb 0.15178 7.46277 103.63504

ttlb 0.15299 7.58585 103.63520

Requests per second: 6.591

URL min ttfb: (0.15178) /gallery/now/thumbs/thumbs_img_0401-1024x690.jpg

URL max ttfb: (89.01898) /gallery/then/02_03_2011_0007-2.jpg

URL min ttlb: (0.15336) /gallery/now/thumbs/thumbs_img_0401-1024x690.jpg

URL max ttlb: (90.55633) /gallery/then/02_03_2011_0007-2.jpg

NOTE: These stats are based on the average time (ttfb or ttlb) for each url.

Protocol stats:

HTTP 200: 499 requests (99.80%)

HTTP 404: 1 requests ( 0.20%)

Press ENTER to save data and exit.

Well, as you can see, we already have some stats in the output: there are some statistics regarding the timings for ttfb (time to first byte), ttlb (time to last byte) , the fastest and slowest URL and some error code stats.

There also were created 3 files in the working directory:

www.example.com_r500_c50_http11.log: Log file with all the gathered data. It is like a CSV with the start time, url, ttfb and ttlb of each request.

www.example.com_r500_c50_http11.stat: It contains a python dictionary with the stats reported in the command line.

www.example.com_r500_c50_http11.png: A plot of the benchmark. This plot is also what you see if you use the --live option. See the following image.
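If you want to post-process the result files yourself, something along these lines could be a starting point. This is only a sketch under assumptions: it assumes the .log file is comma-separated with exactly four columns (start time, url, ttfb, ttlb) and no header, and that the .stat file holds a plain dictionary literal. The function names and the dictionary keys in the demo are hypothetical, so check your own files before relying on it.

```python
import ast
import csv
import io
from collections import defaultdict


def load_requests(log_file):
    """Parse rows of 'start_time,url,ttfb,ttlb' (assumed layout) into tuples."""
    rows = []
    for row in csv.reader(log_file):
        if len(row) != 4:
            continue  # skip blank or unexpected lines
        start, url, ttfb, ttlb = row
        rows.append((float(start), url, float(ttfb), float(ttlb)))
    return rows


def summarize(values):
    """Return (min, avg, max) for a non-empty sequence of timings."""
    return min(values), sum(values) / len(values), max(values)


def slowest_url_by_avg(rows, column):
    """Average one timing column per URL; return the (url, avg) with the highest average."""
    per_url = defaultdict(list)
    for row in rows:
        per_url[row[1]].append(row[column])
    averages = {url: sum(times) / len(times) for url, times in per_url.items()}
    url = max(averages, key=averages.get)
    return url, averages[url]


def load_stats(text):
    """Read a dictionary literal safely, without using eval()."""
    return ast.literal_eval(text)


if __name__ == "__main__":
    # Made-up sample data standing in for a real .log file.
    sample = io.StringIO("1.0,/a,0.5,1.0\n2.0,/a,1.5,2.0\n3.0,/b,1.3,1.4\n")
    rows = load_requests(sample)
    print(summarize([row[2] for row in rows]))   # overall ttfb min, avg, max
    print(slowest_url_by_avg(rows, 2))           # slowest URL by average ttfb
    print(load_stats("{'ttfb': {'min': 0.15178}}"))  # hypothetical keys
```

In the sample data the single slowest request belongs to /a, but /b has the highest average, which is the same effect described in the NOTE of the command line output.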

REQUIREMENTS

python-urllib3: I had to use urllib3 due to threading problems in urllib2 (thread safety and Python...)

python-gnuplot

gnuplot-x11: It is important to have the x11 package installed so that live plotting works.

So, if you are on a Debian/Ubuntu system: apt-get install python-urllib3 python-gnuplot gnuplot-x11

UPDATE: Check the last post with the latest modifications: https://www.treitos.com/blog/posts/pywench-huge-improvements.html

You can get this tool at this URL: https://github.com/diego-XA/dgtool/tree/master/pywench As always, comments are welcome, so if you have any questions, have found a bug or have a suggestion, please let me know.